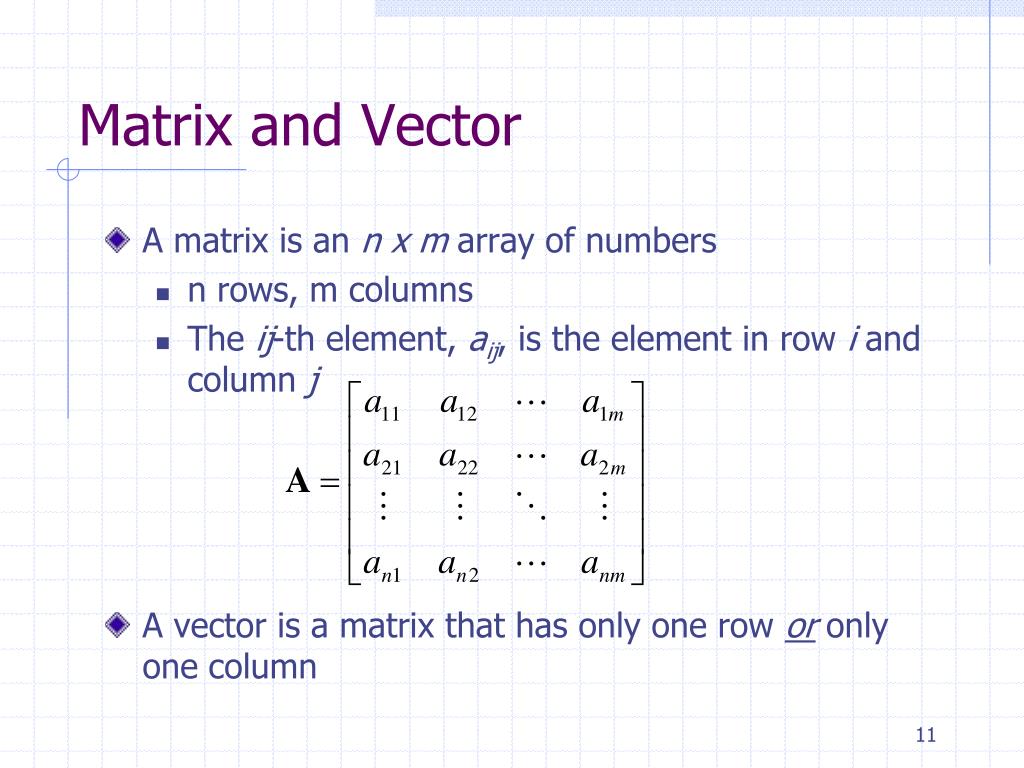

The computation below is the same as in the above, only in vectorized form.) (If unwrapping the matrix-vector product seems too complex, I got you. Make a mental note of this, because it is important. Moreover, we can look at a matrix-vector product as a linear combination of the column vectors. The images of basis vectors form the columns of the matrix. You know, those that stretch, skew, rotate, flip, or otherwise linearly distort the space. Matrices represent linear transformations. This sounds a bit algebra-y, so let's see this idea in geometric terms. Similarly, multiplying A A A with a (column) vector whose second component is 1 1 1 and the rest is 0 0 0 yields the second column of A A A.īy the same logic, we conclude that A A A times e k e_k e k equals the k k k-th column of A A A. Turns out that the product of A A A and e 1 e_1 e 1 is the first column of A A A. Let's name this special vector e 1 e_1 e 1 . Now, let's look at a special case: multiplying the matrix A A A with a (column) vector whose first component is 1 1 1, and the rest is 0 0 0. The element in the i i i-th row and j j j-th column of A B AB A B is the dot product of A A A's i i i-th row and B B B's j j j-th column. Here is a quick visualization before the technical details.

Not the easiest (or most pleasant) to look at. This is how the product of A A A and B B B is given. Yet, there is a stunningly simple explanation behind it.įirst, the raw definition. Matrix multiplication is not easy to understand.Įven looking at the definition used to make me sweat, let alone trying to comprehend the pattern.
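If you would rather check these claims with numbers than take them on faith, here is a minimal sketch in plain Python. The helper names (`matmul`, `matvec`, `column`, `combine_columns`) and the example matrices are my own choices for illustration, not part of any library. Note that the code counts columns from 0, so the vector called $e_1$ in the text picks out `column(A, 0)`.

```python
def matmul(A, B):
    """Product of two matrices given as lists of rows."""
    n, m, p = len(A), len(B), len(B[0])
    assert all(len(row) == m for row in A), "inner dimensions must match"
    # Entry (i, j) is the dot product of row i of A and column j of B.
    return [[sum(A[i][k] * B[k][j] for k in range(m)) for j in range(p)]
            for i in range(n)]


def matvec(A, x):
    """Matrix-vector product, computed row by row."""
    return [sum(a * xi for a, xi in zip(row, x)) for row in A]


def column(A, k):
    """k-th column of A (0-indexed)."""
    return [row[k] for row in A]


def combine_columns(A, x):
    """Linear combination of A's columns, weighted by the entries of x."""
    result = [0] * len(A)
    for k, weight in enumerate(x):
        result = [r + weight * c for r, c in zip(result, column(A, k))]
    return result


# Hypothetical example data.
A = [[1, 2], [3, 4], [5, 6]]   # 3 x 2
B = [[7, 8, 9], [10, 11, 12]]  # 2 x 3
x = [2, -1]
e1, e2 = [1, 0], [0, 1]        # vectors with a single 1 and zeros elsewhere

AB = matmul(A, B)

print(AB)                      # every entry is a row-times-column dot product
print(matvec(A, e1))           # first column of A
print(matvec(A, e2))           # second column of A
print(matvec(A, x))            # row-by-row computation
print(combine_columns(A, x))   # same vector, built from the columns
```

The last two lines print the same vector: computing `A` times `x` row by row and summing the columns of `A` weighted by the entries of `x` are two views of one computation.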
